I made this material in the picture out of dryer lint and Elmer's white glue.

It took about a week to dry completely, but once it did it was hard as a board. I can't flex it even using all the strength in my hands.

To make it, take the dryer lint and tear it into pieces as small as you can, then keep mixing it together until it is as uniform as you can get it. Also remove anything in there that isn't dryer lint. Then gradually mix in the glue (I used Elmer's, but I might even try some kind of epoxy next time). Knead it like dough. It will absorb a ton of glue, maybe half of a good-size bottle; I'm not sure of the exact amount, I kind of had to guess. Then set it out in the sun for about a week. For the first few days it will form a hard crust on the outside but still give when you press it. Eventually it will dry all the way through and be hard as a board, and not give at all no matter how hard you press.

I had the idea based on how they make carbon fiber, but this uses cotton fiber that's been processed in the dryer, which I think everyone throws away anyway. I think the really useful property of this material is that, because it's made out of cotton fiber, it should conduct very little heat. Imagine an inch-thick solid sweater and how warm that would be. So my first thought is a possible replacement for drywall that is stronger and insulates a house better. But it could also be fun to make sculptures or anything else out of it.

A couple more things: I think it would be fun and safe for kids to use (when you are kneading it, it is really gushy, and I think they would like that). I also noticed that it is naturally buoyant; the sample in the picture floats half out of a glass of water longways. I believe it would also be pretty soundproof, but a flame retardant would probably have to be mixed in before it is used in structures.

Friday, April 26, 2013

Thursday, April 11, 2013

Payouts in a tournament paying a certain number of places

Suppose you have a tournament and you have a certain amount of money pooled together and you want to pay a certain number of places. I found this fair way to figure the payouts. Consider the Golden Spiral:

Now consider this payout structure:

The payouts follow the Golden Spiral: you follow the spiral until you have the desired number of rectangles, one for each person getting a payout.

Then you can use this to figure the actual amounts (this is for 3 places):

For a million dollars paying out to 5 places it looks like:

The general formula is:

Where total is the amount of money pooled and n is the number of places to pay out. Instead of 1.618 you can use more accurate values of the golden ratio, but you only need to if the amount pooled is quite large.

Or you can use different ratios, but the golden ratio has the nice property that each place gets as much as the next two finishers combined (for every place that has two paid finishers below it).
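As a rough sketch of how this could be computed, the code below assumes the payouts form a geometric sequence in which each place gets 1.618 times the next place, scaled so that everything adds up to the pool. The function name `golden_payouts` and this exact form are assumptions for illustration; they are chosen to match the "next two finishers combined" property rather than copied from the formula.

```python
def golden_payouts(total, n, ratio=1.618):
    """Split total among n places so each place gets ratio times the next one."""
    # Assumed form: weights ratio^(n-1), ..., ratio^1, ratio^0 from first to last place.
    weights = [ratio ** (n - 1 - place) for place in range(n)]
    scale = total / sum(weights)  # scale the weights so the payouts sum to total
    return [weight * scale for weight in weights]


# A million dollars paid out to 5 places, first place listed first.
for place, amount in enumerate(golden_payouts(1_000_000, 5), start=1):
    print(place, round(amount, 2))
```

Passing a more accurate ratio such as `(1 + 5 ** 0.5) / 2` makes first place equal to second plus third up to rounding error.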

Sunday, April 7, 2013

Recursive metaprogramming

I was thinking if you have an interpreter that takes a program listing like this:

a(b(c,d,e), f("foo.txt", "sample.png", 1))

But a,b,c,d,e, and f are all stand-alone programs. They can execute by themselves taking certain numbers of arguments and returning something, but this interpreter I'm thinking of can recurse through this structure and feed the outputs from each program to an enclosing program.

For example, in the above, f could be a program that writes the text in the first argument on the picture in the second argument if the third argument is 1. c, d, and e could be programs that output images, and b makes a composite image from them. Then a is a program that puts the results of all of those in one big composite picture, displays it and plays a song.

Anyway the idea is that using this interpreted language you could break a big program into a lot of small programs that the operating system can schedule and use all of the available processors of your computer. If instead you made one big program out of each of these small programs as say, functions inside the program, you would have to do a lot of work programming how to distribute the workload across all the cores or how to do the threads and all of that stuff is hard to program. But the operating system already does a good job of this for multitasking the various programs you might have running on your computer, so you just let it do the hard part.

The one I showed was just a one line interpreted program of programs but the language could have a lot more features.
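Here is a minimal sketch of the recursion, under some loud assumptions: the listing happens to be valid Python call syntax, so Python's `ast` module does the parsing, and plain Python functions stand in for the stand-alone programs (a real version would launch each one as its own process so the operating system schedules it). The names `evaluate`, `run` and `programs` are made up for the example.

```python
import ast
from concurrent.futures import ThreadPoolExecutor


def evaluate(node, programs):
    """Recursively evaluate a parsed listing, feeding inner outputs to the enclosing program."""
    if isinstance(node, ast.Constant):  # literal argument such as "foo.txt" or 1
        return node.value
    if isinstance(node, ast.Name):      # bare name such as c: run it with no arguments
        return programs[node.id]()
    if isinstance(node, ast.Call):      # name(args...): evaluate the arguments, then run name
        if not node.args:
            return programs[node.func.id]()
        # Sibling arguments do not depend on each other, so evaluate them side by side.
        with ThreadPoolExecutor(max_workers=len(node.args)) as pool:
            args = list(pool.map(lambda arg: evaluate(arg, programs), node.args))
        return programs[node.func.id](*args)
    raise ValueError("unsupported syntax in listing")


def run(listing, programs):
    return evaluate(ast.parse(listing, mode="eval").body, programs)


# Stand-ins for the stand-alone programs; they build strings instead of images.
programs = {
    "c": lambda: "c",
    "d": lambda: "d",
    "e": lambda: "e",
    "b": lambda *images: "b[" + ",".join(images) + "]",
    "f": lambda text, picture, flag: picture + "+" + text if flag == 1 else picture,
    "a": lambda left, right: "a[" + left + "|" + right + "]",
}

print(run('a(b(c,d,e), f("foo.txt", "sample.png", 1))', programs))
```

The threads here only mark where the independent pieces are; swapping each stand-in for a call that starts a separate process is what would hand the scheduling to the operating system.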

Parallelizing through a meta programming language

The big issue in computing today is how to write programs so that the things that can happen in parallel are executed at the same time. That means working out which parts of the program depend on which other parts, so the program can take advantage of today's computers with their multiple processors.

The way I am thinking of to approach it is to make a meta programming language that takes individual simple programs and has ways of telling the computer which things can be done in parallel and what parts of the meta program depend on the other parts. Here's a diagram:

This meta program consists of 5 smaller programs that are ordered in columns from left to right. Every column further to the right depends on results from one or more programs in a column to its left. Each of these programs has a list of things that it needs to do, and the metaprogram handles communication between them. The parallelization comes in, for instance, in function f at the top left. Because its to-do list is an unordered list, the metaprogram knows that all of the things on that list can happen at the same time, so it will run (in this case) 3 simultaneous copies of f, one for each thing on the list. Anything else in the first column can also happen at the same time, because programs in that column have no dependencies: there is nothing further to the left. But h in the bottom left, unlike f, has an ordered list, so those things have to happen sequentially.

g is an indexed function, which consists of multiple copies of the same program waiting on different inputs from the multiple copies of f that run. And j is an example of a program that depends on everything to the left of it and is supposed to sequentially reply back to h to get the next input. The metaprogram would keep track of when j's inputs have all been satisfied and it can be run. This would happen when every copy of g has finished and j has received an input from h.

The metaprogram could potentially run many times faster than a regular program run sequentially step by step depending on how many processors the system has.
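To make the dependency rules concrete, here is a small sketch using Python's `concurrent.futures`. It is only an illustration under assumptions: threads stand in for the separate programs, j is simplified to run once after everything to its left has finished (it does not reply back to h step by step), and the name `run_metaprogram` and its parameters are invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor


def run_metaprogram(f, g, h, j, f_items, h_items):
    """Run f in parallel, h in order, one copy of g per f result, then j on everything."""
    with ThreadPoolExecutor() as pool:
        # f's to-do list is unordered, so every item gets its own copy of f.
        f_jobs = [pool.submit(f, item) for item in f_items]
        # h's list is ordered, so a single job works through it front to back.
        h_job = pool.submit(lambda: [h(item) for item in h_items])
        # g is indexed: copy i of g waits only on copy i of f.
        g_jobs = [pool.submit(lambda job=job: g(job.result())) for job in f_jobs]
        # j depends on everything to its left, so it runs last.
        return j([job.result() for job in g_jobs], h_job.result())


result = run_metaprogram(
    f=lambda x: x * 2,
    g=lambda y: y + 1,
    h=lambda s: s.upper(),
    j=lambda g_results, h_results: (g_results, h_results),
    f_items=[1, 2, 3],
    h_items=["x", "y"],
)
print(result)
```

The point of the sketch is that the caller only states what depends on what; the order in which the independent jobs actually run is left to the pool.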

So now the thing would be to find a good syntax for the meta programming language and write an implementation. I think this is the gist of a good way to approach the problem though.

Thursday, April 4, 2013

Easy way to factor a number or tell whether it's prime

If T is the number you want to factor or test for primality, you can repeat this process recursively until every factor is prime. First find s^2, the largest perfect square no larger than T. Then check whether this formula:

f(a) = T/(s-a) - s

evaluates to a natural number for any natural number a from 1 to s-2. If it does, the number is composite and equals (s-a)*(s+f(a)). If it doesn't, also check whether s itself divides T (the case a = 0, which is needed for numbers like 35 = 5*7 or 25 = 5*5). If that fails too, the number is prime.

For example the number 32 with everything plugged in:

You start with a = 1, and that evaluates to an integer, 3, so you know that 32 = (5-1)*(5+3), or 4*8. We might have had to try 2 and 3 as well, but didn't have to in this case.

But if you try 31...

This is never a natural number for a = {1...3}, so 31 must be prime.
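The procedure can be sketched in a few lines of Python. This is an illustration, not a proof: it takes f(a) = T/(s-a) - s, which is what the factorization T = (s-a)*(s+f(a)) forces, and it makes two assumptions beyond the description above so that numbers like 35 = 5*7 and 25 = 5*5 are handled: s is the integer square root of T, and a starts at 0. The function names are made up.

```python
import math


def split(T):
    """Return (s - a, s + f(a)) for the first a that works, or None if T is prime."""
    s = math.isqrt(T)                # s^2 is the largest perfect square no larger than T
    for a in range(0, s - 1):        # a = 0 .. s-2, so s - a runs from s down to 2
        divisor = s - a
        if T % divisor == 0:         # f(a) = T/(s-a) - s is a whole number
            f_a = T // divisor - s
            return divisor, s + f_a
    return None


def factor(T):
    """Apply split recursively until every factor is prime (assumes T >= 2)."""
    pair = split(T)
    if pair is None:
        return [T]
    return sorted(factor(pair[0]) + factor(pair[1]))


print(split(32))   # (4, 8), matching the worked example
print(split(31))   # None, so 31 is prime
print(factor(32))  # [2, 2, 2, 2, 2]
```

Seen this way, the check is trial division by every number from s down to 2, written in terms of the distance a from s.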

*** Todo write out the proof I have it in my head but am trying to find the best way to say it**

Tuesday, April 2, 2013

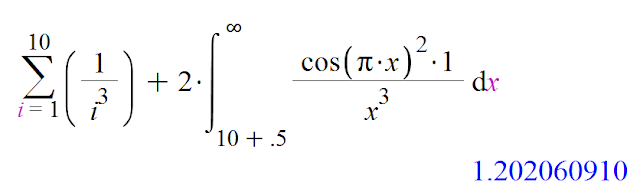

Calculating the tail of the Zeta function

The well known Zeta function is this:

The integral on the right actually has a definite form (for each value k):

where the Ci function is:

And the gamma constant is:

I found this function:

As A gets larger, this function gets closer and closer to the true value. For instance:

whereas the sum on the left by itself is only:

So the integral appears to be adding very close to the right amount.

and making A larger...

Now back to the original formula I found:

There is a simple reason why it works: the integral of cos(pi*x)^2 from an integer minus .5 to that integer plus .5 is exactly 1/2, and for larger integers the function you multiply it by is nearly constant over that interval. So the integral of the product behaves like a discrete sum of that function over the integers, divided by 2. It is actually a handy way to convert from a discrete sum to an integral that I'll probably go more into in the future.
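A quick numerical check of that reasoning, as a sketch: the helper below (the name `window_integral` and the midpoint rule are choices made for illustration, not part of the formula) integrates cos(pi*x)^2 times a function g over one unit-wide window centered on an integer n.

```python
import math


def window_integral(g, n, steps=2000):
    """Midpoint-rule estimate of the integral of cos(pi*x)^2 * g(x) over [n - 0.5, n + 0.5]."""
    width = 1.0 / steps
    total = 0.0
    for i in range(steps):
        x = n - 0.5 + (i + 0.5) * width   # midpoint of the i-th slice
        total += math.cos(math.pi * x) ** 2 * g(x)
    return total * width


# With g = 1 the window integrates to 1/2.
print(window_integral(lambda x: 1.0, 7))

# With g(x) = 1/x^3, twice the window integral is close to the single term 1/n^3.
for n in (2, 5, 10):
    print(n, 2 * window_integral(lambda x: x ** -3, n), n ** -3)
```

The two printed columns agree more closely as n grows, which is why starting the integral further out gives more correct decimal places.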

The example for k=3...

So the integral is adding something around .02 and getting a lot of decimal places correct.

A faster approximation of e

Of course there's this approximation of e:

But the 5 has to be a large number to get very close to e. This next one is faster:

But I found this one that is even faster for just a little more arithmetic:

This one is 3 more digits correct for n=5.

I looked online for a list of different possible power series for e, but the first two I listed here are the only ones I found, so maybe this one is new. I thought of it as kind of a combination of the first two, and it seems to work.

***

A proof of why it converges faster is that the terms inside the parentheses are actually the Taylor series for the 5th root of e^x centered around x = 0, evaluated at x = 1. Since the terms of that series get smaller so much faster than those of the Taylor series for e^x centered around 0 (because of the 5^i factors in the denominators), fewer terms are needed to get close to its limit. Then you raise it to the 5th power to get e.

The fives in the denominators of the terms in the parentheses can be increased to any number as long as the exponent on the parentheses matches, or the series in the parentheses can be lengthened and still raised to the same power. Since there are two ways to go, I'm not sure which is easier to calculate or whether there is a difference in the rate of convergence, but maybe I'll look more into it at some point.
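To compare the approximations side by side, here is a small sketch. It assumes the three formulas are (1 + 1/n)^n, the sum of 1/i! for i from 0 to n, and the sum of 1/(r^i * i!) for i from 0 to n raised to the power r (with r = 5), which is how the description above reads. The function names `compound`, `taylor` and `rooted` are invented for the example.

```python
import math


def compound(n):
    """(1 + 1/n)^n"""
    return (1 + 1 / n) ** n


def taylor(n):
    """Sum of 1/i! for i = 0..n (Taylor series of e^x at x = 1)."""
    return sum(1 / math.factorial(i) for i in range(n + 1))


def rooted(n, r=5):
    """Series for the r-th root of e, truncated after the i = n term, raised to the r-th power."""
    return sum(1 / (r ** i * math.factorial(i)) for i in range(n + 1)) ** r


for name, value in (("compound", compound(5)), ("taylor", taylor(5)), ("rooted", rooted(5))):
    print(name, value, abs(value - math.e))
```

In this form the other two formulas fall out as special cases: `rooted(1, r=n)` is (1 + 1/n)^n and `rooted(n, r=1)` is the plain Taylor sum, so both knobs (more terms, or a larger r) can be tried from the same function.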

The two classical approaches to calculating e that I first listed can thus both be thought of as special cases of the formula I've found. The first uses the first two terms of the nth root series raised to the nth power; the second uses the first root series raised to the power of 1.

**Update**

Actually, my professor Dr. Rose recalled a paper he had read by Harlan Brothers and John Knox that discussed different faster approximations for e, and it turns out an improved version of the one I found was found by Harlan Brothers here: http://www.brotherstechnology.com/math/e-formulas.html Formula 25 results from what he calls compressing the series I have here.
